This sample project demonstrates how to use AWS Step Functions to pre-process data with AWS Lambda and store it in Amazon S3, then train a machine learning (ML) model and run a batch transform through Amazon SageMaker. Deploying this sample project creates an AWS Step Functions state machine, a Lambda function, and an S3 bucket, along with the required IAM roles and log group.

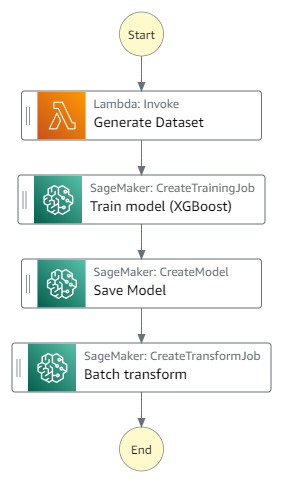

Below are the stages of the Step Functions workflow, showing how it orchestrates the steps for training a machine learning model in SageMaker.

a. The first stage of the Step Functions workflow calls a Lambda function, which generates data and processes it to create a train-test split dataset. The train and test data are placed in the S3 bucket as CSV files.

b. In the second stage, using the SageMaker service integration, Step Functions starts a SageMaker training job that uses XGBoost to create a logistic regression ML model from the train dataset to predict the target value.

c. Once the model is trained, the third stage of the state machine run saves it to the S3 bucket using a SageMaker model job.

d. In the last stage, the test data is run through a batch transformation using a SageMaker transform job, and the output file is placed in the S3 output location.
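The four stages above can be sketched as a skeleton Amazon States Language definition, built here as a Python dictionary. This is only an illustrative outline, not the definition shipped in the repo: the state names and the placeholder Lambda ARN are hypothetical, and the SageMaker `Parameters` blocks (job names, container image, S3 paths, role ARN) are left empty because the source does not specify them. The `Resource` values are the Step Functions service integration identifiers for Lambda and SageMaker.

```python
import json

# Hypothetical placeholder; the real project supplies this from its SAM template.
LAMBDA_ARN = "arn:aws:lambda:REGION:ACCOUNT_ID:function:PLACEHOLDER"


def build_definition(lambda_arn: str) -> dict:
    """Return a skeleton state machine definition with the four stages."""
    return {
        "Comment": "Sketch: pre-process, train, save model, batch transform",
        "StartAt": "GenerateDataset",
        "States": {
            # Stage a: Lambda generates the data and writes train/test CSVs to S3.
            "GenerateDataset": {
                "Type": "Task",
                "Resource": "arn:aws:states:::lambda:invoke",
                "Parameters": {"FunctionName": lambda_arn, "Payload.$": "$"},
                "Next": "TrainModel",
            },
            # Stage b: SageMaker training job; .sync waits for completion.
            "TrainModel": {
                "Type": "Task",
                "Resource": "arn:aws:states:::sagemaker:createTrainingJob.sync",
                "Parameters": {},  # training job settings omitted in this sketch
                "Next": "SaveModel",
            },
            # Stage c: create the SageMaker model from the training output.
            "SaveModel": {
                "Type": "Task",
                "Resource": "arn:aws:states:::sagemaker:createModel",
                "Parameters": {},  # model settings omitted in this sketch
                "Next": "BatchTransform",
            },
            # Stage d: batch transform of the test data; .sync waits for completion.
            "BatchTransform": {
                "Type": "Task",
                "Resource": "arn:aws:states:::sagemaker:createTransformJob.sync",
                "Parameters": {},  # transform job settings omitted in this sketch
                "End": True,
            },
        },
    }


def stage_order(definition: dict) -> list:
    """Follow the Next pointers from StartAt and return the state names in order."""
    order = []
    name = definition["StartAt"]
    while name is not None:
        order.append(name)
        name = definition["States"][name].get("Next")
    return order


definition = build_definition(LAMBDA_ARN)
print(json.dumps(definition, indent=2))
```

Calling `stage_order(definition)` walks the states in execution order, which is a quick way to check that the chain matches stages a through d before filling in the real parameters.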

Clone repo

git clone https://github.com/aws-samples/step-functions-workflows-collection
cd step-functions-workflows-collection/train-ml-model

Deploy

sam deploy --guided

Testing

See the GitHub repo for detailed testing instructions.

Cleanup

1. Delete the stack:

sam delete